I need to find a fairly efficient way to detect syllables in a word. E.g.,

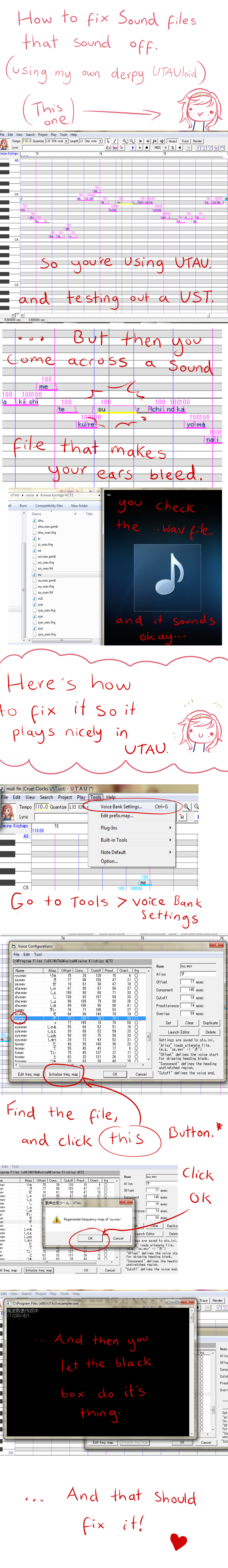

Jul 09, 2012 btw, Ikasama Casino doesn't have any of these problems c: Now that you've got your tempo done, set your tempo on the MIDI program, and it's time to determine the first note. Determining the Notes This is the harder part. Hopefully you have a good ear because otherwise I.

Invisible -> in-vi-sib-le

There are some syllabification rules that could be used:

V, CV, VC, CVC, CCV, CCCV, CVCC

*where V is a vowel and C is a consonant. E.g.,

Pronunciation (5 syllables: Pro-nun-ci-a-tion; CCV-CVC-CV-V-CVVC)

I've tried a few methods: regex (which helps only if you want to count syllables), hard-coded rule definitions (a brute-force approach that proved very inefficient), and finally a finite-state automaton (which did not yield anything useful).

The purpose of my application is to create a dictionary of all syllables in a given language. This dictionary will later be used for spell checking applications (using Bayesian classifiers) and text to speech synthesis.

I would appreciate any tips on alternative ways to solve this problem, beyond the approaches I have already tried.

I work in Java, but any tip in C/C++, C#, Python, Perl... would work for me.

15 Answers

Read about the TeX approach to this problem for the purposes of hyphenation. In particular, see Frank Liang's thesis, Word Hy-phen-a-tion by Com-put-er. His algorithm is very accurate, and it is supplemented by a small exceptions dictionary for the cases where the algorithm does not work.
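To make the idea concrete, here is a minimal Python sketch of Liang-style pattern matching. The nine patterns are a tiny illustrative subset chosen for this example (an assumption, not a usable pattern file), and the function names are hypothetical. Digits in a pattern are weights for the position between letters; the highest weight at each position wins, and an odd weight permits a break.

```python
import re

# Illustrative subset of Liang-style patterns (an assumption for this sketch;
# a real pattern file contains thousands of entries).
RAW_PATTERNS = ["hy3ph", "he2n", "hena4", "hen5at", "1na", "n2at", "1tio", "2io", "o2n"]


def parse_pattern(pattern):
    """Split e.g. 'hy3ph' into letters 'hyph' and weights [0, 0, 3, 0, 0]."""
    letters = re.sub(r"\d", "", pattern)
    weights = [int(d or 0) for d in re.split(r"[.a-z]", pattern)]
    return letters, weights


PATTERNS = dict(parse_pattern(p) for p in RAW_PATTERNS)


def hyphenate(word, patterns=PATTERNS):
    """Return the pieces of `word` between permitted break points."""
    work = "." + word.lower() + "."   # dots mark the word boundaries
    points = [0] * (len(work) + 1)    # points[i] is the weight just before work[i]
    for i in range(len(work)):
        for j in range(i + 1, len(work) + 1):
            weights = patterns.get(work[i:j])
            if weights:
                for k, w in enumerate(weights):
                    points[i + k] = max(points[i + k], w)
    # Never break right after the first letter or right before the last one.
    points[1] = points[2] = points[-2] = points[-3] = 0
    pieces = [""]
    for ch, p in zip(word, points[2:]):
        pieces[-1] += ch
        if p % 2:                      # odd weight: a break is allowed here
            pieces.append("")
    return pieces


print("-".join(hyphenate("hyphenation")))  # hy-phen-ation
```

Note that hyphenation points are a conservative subset of syllable boundaries: "ation" stays in one piece here even though it spans more than one syllable.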

I stumbled across this page looking for the same thing, and found a few implementations of the Liang paper here: https://github.com/mnater/hyphenator

That is, unless you're the type who enjoys reading a 60-page thesis instead of adapting freely available code for a non-unique problem. :)

I'm trying to tackle this problem for a program that will calculate the Flesch-Kincaid and Flesch reading scores of a block of text. My algorithm uses what I found on this website: http://www.howmanysyllables.com/howtocountsyllables.html and it gets reasonably close. It still has trouble with complicated words like "invisible" and "hyphenation", but I've found it gets in the ballpark for my purposes.

It has the upside of being easy to implement. I found the 'es' can be either syllabic or not. It's a gamble, but I decided to remove the es in my algorithm.
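As a rough illustration of this kind of heuristic, the Python sketch below counts runs of consecutive vowels and drops a likely-silent ending first. It is not the answerer's code: the function name, the vowel set (which treats "y" as a vowel), and the exact ending rules are assumptions made for the example.

```python
VOWELS = set("aeiouy")  # treating "y" as a vowel is an assumption of this sketch


def count_syllables(word):
    """Estimate the syllable count of an English word by counting vowel groups."""
    word = word.lower().strip()
    if not word:
        return 0
    # Drop a likely-silent ending: "es", "ed", or a final "e" that is not "le".
    # Removing "es" is a gamble, since it is sometimes syllabic.
    if len(word) > 2 and word.endswith(("es", "ed")):
        word = word[:-2]
    elif len(word) > 2 and word.endswith("e") and not word.endswith("le"):
        word = word[:-1]
    count = 0
    previous_was_vowel = False
    for ch in word:
        is_vowel = ch in VOWELS
        if is_vowel and not previous_was_vowel:
            count += 1                 # a new vowel group starts here
        previous_was_vowel = is_vowel
    return max(count, 1)               # every word has at least one syllable


for w in ["invisible", "cake", "the", "pronunciation"]:
    print(w, count_syllables(w))
```

The sketch undercounts words whose adjacent vowels belong to separate syllables: "pronunciation" comes out as 4 instead of 5 because "ia" is treated as a single group.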

This is a particularly difficult problem which is not completely solved by the LaTeX hyphenation algorithm. A good summary of some available methods and the challenges involved can be found in the paper Evaluating Automatic Syllabification Algorithms for English (Marchand, Adsett, and Damper 2007).

Thanks Joe Basirico, for sharing your quick and dirty implementation in C#. I've used the big libraries, and they work, but they're usually a bit slow, and for quick projects, your method works fine.

Here is your code in Java, along with test cases:

The result was as expected (it works well enough for Flesch-Kincaid):

Bumping @Tihamer and @joe-basirico. Very useful function, not perfect, but good for most small-to-medium projects. Joe, I have re-written an implementation of your code in Python:

Hope someone finds this useful!

Perl has Lingua::Phonology::Syllable module. You might try that, or try looking into its algorithm. I saw a few other older modules there, too.

I don't understand why a regular expression gives you only a count of syllables. You should be able to get the syllables themselves using capture parentheses. Assuming you can construct a regular expression that works, that is.
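For illustration, here is one way a regular expression can return the pieces rather than just a count, sketched in Python. The pattern is an assumption chosen for this example, not a complete syllabification rule: each match takes optional leading consonants, a run of vowels, and then either all trailing consonants at the end of the word or a single consonant that is followed by another consonant.

```python
import re

# Hypothetical pattern for this sketch; "y" is treated as a vowel.
SYLLABLE_RE = re.compile(
    r"[^aeiouy]*[aeiouy]+(?:[^aeiouy]*$|[^aeiouy](?=[^aeiouy]))?",
    re.IGNORECASE,
)


def split_syllables(word):
    """Return the regex matches as a list of syllable-like chunks."""
    return SYLLABLE_RE.findall(word)


print(split_syllables("invisible"))      # ['in', 'vi', 'sib', 'le']
print(split_syllables("pronunciation"))  # ['pro', 'nun', 'cia', 'tion']
```

Because the pattern keeps every vowel run together, it cannot separate "ci-a" in "pronunciation"; a purely orthographic regex needs extra rules or an exceptions list for such cases.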

Today I found this Java implementation of Frank Liang's hyphenation algorithm with patterns for English and German, which works quite well and is available on Maven Central.

Caveat: it is important to remove the last lines of the .tex pattern files, because otherwise those files cannot be loaded with the current version on Maven Central.

To load and use the hyphenator, you can use the following Java code snippet. texTable is the name of the .tex files containing the needed patterns. Those files are available on the project github site.

Afterwards the Hyphenator is ready to use. To detect syllables, the basic idea is to split the term at the provided hyphens.

You need to split on '\u00AD' (the Unicode soft hyphen), since the API does not return a normal '-'.
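The splitting step itself is language-independent. As a minimal Python sketch, assuming the hyphenator has already returned a string with soft hyphens inserted (the sample input and the function name below are made up for illustration):

```python
SOFT_HYPHEN = "\u00ad"  # U+00AD, invisible in most renderings


def pieces_from_hyphenated(hyphenated):
    """Split a soft-hyphenated string into its pieces, skipping empty ones."""
    return [part for part in hyphenated.split(SOFT_HYPHEN) if part]


# Hypothetical hyphenator output for "invisible".
sample = "in\u00advis\u00adi\u00adble"
print(pieces_from_hyphenated(sample))  # ['in', 'vis', 'i', 'ble']
```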

This approach outperforms Joe Basirico's answer, since it supports many different languages and detects German hyphenation more accurately.

Why calculate it? Every online dictionary has this info. http://dictionary.reference.com/browse/invisible gives in·vis·i·ble

Thank you @joe-basirico and @tihamer. I have ported @tihamer's code to Lua 5.1, 5.2 and luajit 2 (most likely will run on other versions of lua as well):

countsyllables.lua

And some fun tests to confirm it works (as much as it's supposed to):

countsyllables.tests.lua

I could not find an adequate way to count syllables, so I designed a method myself.

You can view my method here: https://stackoverflow.com/a/32784041/2734752

I use a combination of a dictionary and algorithm method to count syllables.

You can view my library here: https://github.com/troywatson/Lawrence-Style-Checker

I just tested my algorithm and had a 99.4% strike rate!

Output:

I ran into this exact same issue a little while ago.

I ended up using the CMU Pronunciation Dictionary for quick and accurate lookups of most words. For words not in the dictionary, I fell back to a machine learning model that's ~98% accurate at predicting syllable counts.
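The dictionary half of this approach is easy to sketch. In CMUdict-style entries, every vowel phoneme carries a stress digit (0, 1, or 2), so the syllable count is the number of phonemes that end in a digit. The Python below is a minimal sketch using two inline sample entries in that format rather than the full dictionary; the function names and the `fallback` parameter are assumptions for illustration, and the machine-learning fallback is not reproduced.

```python
# Two sample entries in CMUdict format (word, then ARPAbet phonemes).
SAMPLE_ENTRIES = """\
INVISIBLE  IH0 N V IH1 Z AH0 B AH0 L
SYLLABLE  S IH1 L AH0 B AH0 L
"""


def load_pronunciations(text):
    """Parse CMUdict-style lines into {word: [phoneme, ...]}."""
    pronunciations = {}
    for line in text.splitlines():
        if not line.strip() or line.startswith(";;;"):  # skip blanks and comments
            continue
        word, *phones = line.split()
        pronunciations[word.lower()] = phones
    return pronunciations


def count_syllables(word, pronunciations, fallback=None):
    """Count vowel phonemes; use `fallback(word)` for out-of-dictionary words."""
    phones = pronunciations.get(word.lower())
    if phones is None:
        return fallback(word) if fallback else None
    return sum(1 for p in phones if p[-1].isdigit())


PRON = load_pronunciations(SAMPLE_ENTRIES)
print(count_syllables("invisible", PRON))  # 4
print(count_syllables("syllable", PRON))   # 3
```

Any estimator, such as a vowel-group heuristic or a trained model, can be passed as `fallback` for words the dictionary does not cover.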

I wrapped the whole thing up in an easy-to-use python module here: https://github.com/repp/big-phoney

Install: pip install big-phoney

Count Syllables:

If you're not using Python and you want to try the ML-model-based approach, I did a pretty detailed write up on how the syllable counting model works on Kaggle.

After doing a lot of testing and trying out hyphenation packages as well, I wrote my own based on a number of examples. I also tried the pyhyphen and pyphen packages, which interface with hyphenation dictionaries, but they produce the wrong number of syllables in many cases. The nltk package was simply too slow for this use case.

My implementation in Python is part of a class I wrote, and the syllable counting routine is pasted below. It overestimates the number of syllables a bit, as I still haven't found a good way to account for silent word endings.

The function returns the ratio of syllables per word as it is used for a Flesch-Kincaid readability score. The number doesn't have to be exact, just close enough for an estimate.
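To show why an approximate ratio is enough, here is a short Python sketch of the two standard Flesch formulas, both of which take syllables per word as an input. The function names and the sample counts are made up for illustration; only the coefficients come from the published formulas.

```python
def flesch_reading_ease(words, sentences, syllables):
    """Flesch reading ease: higher scores mean easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words, sentences, syllables):
    """Flesch-Kincaid grade level: approximate US school grade."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# Hypothetical counts for a short text.
words, sentences, syllables = 100, 5, 150
print(round(flesch_reading_ease(words, sentences, syllables), 3))   # 59.635
print(round(flesch_kincaid_grade(words, sentences, syllables), 2))  # 9.91
```

With these counts, miscounting by five syllables shifts the grade level by 11.8 × 0.05 = 0.59, which is why a close estimate is usually acceptable for readability scoring.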

On my 7th generation i7 CPU, this function took 1.1-1.2 milliseconds for a 759 word sample text.

I used jsoup to do this once. Here's a sample syllable parser:

